AI is rapidly entering health settings, but its integration raises questions about equity and trust. We examined the perspectives of patients who are historically underserved by healthcare—those most at risk from poorly implemented AI—to drive AI implementation that better meets their needs.

Through qualitative research with patients, we generated insights and design principles to improve AI implementation. Our findings provided healthcare system leaders with actionable guidance in pursuit of strengthening patient-provider relationships and driving more equitable health outcomes.

While AI offers significant potential to improve health outcomes and system efficiencies, its integration into primary care settings also raises questions about equity, trust, consent, and the future of patient-provider relationships.

To ensure that AI tools support equitable primary care, it is essential to understand how people experience and perceive these technologies in their healthcare — especially people who are not typically represented in tech product development, who have been historically underserved by the medical community, or who are typically less trusting of doctors.

We partnered with the Commonwealth Fund to conduct a qualitative design research study around the integration of AI technologies in primary care settings, with a particular focus on patient populations that have been historically underserved by and/or are mistrustful of the US healthcare system. Our research sought to identify how patients feel about the existing integration of AI in primary care, identify language that allows individuals to best understand and consent to such integration, and envision ways that AI-supported systems might eliminate gaps in the quality of care patients receive and enable them to be active partners in their care.

Based on our research, we developed insights and design principles, which we delivered to the Commonwealth Fund. The Fund is disseminating these findings to healthcare system leaders, policymakers, and AI technologists to inform the ethical implementation of AI tools in primary care, including decisions about AI tool selection, implementation, and patient communication.

Inquiry Areas

To explore these questions, we spoke with people in New York City and Southwest Virginia, prioritizing low-income individuals from populations historically underserved by or mistrustful of healthcare systems. In New York, participants were predominantly people of color; in Virginia, they were predominantly white. We recruited individuals who had attended at least one primary care visit in the past year so they could reflect on recent experiences with AI in healthcare. We also sought diversity in insurance coverage and familiarity with AI tools.

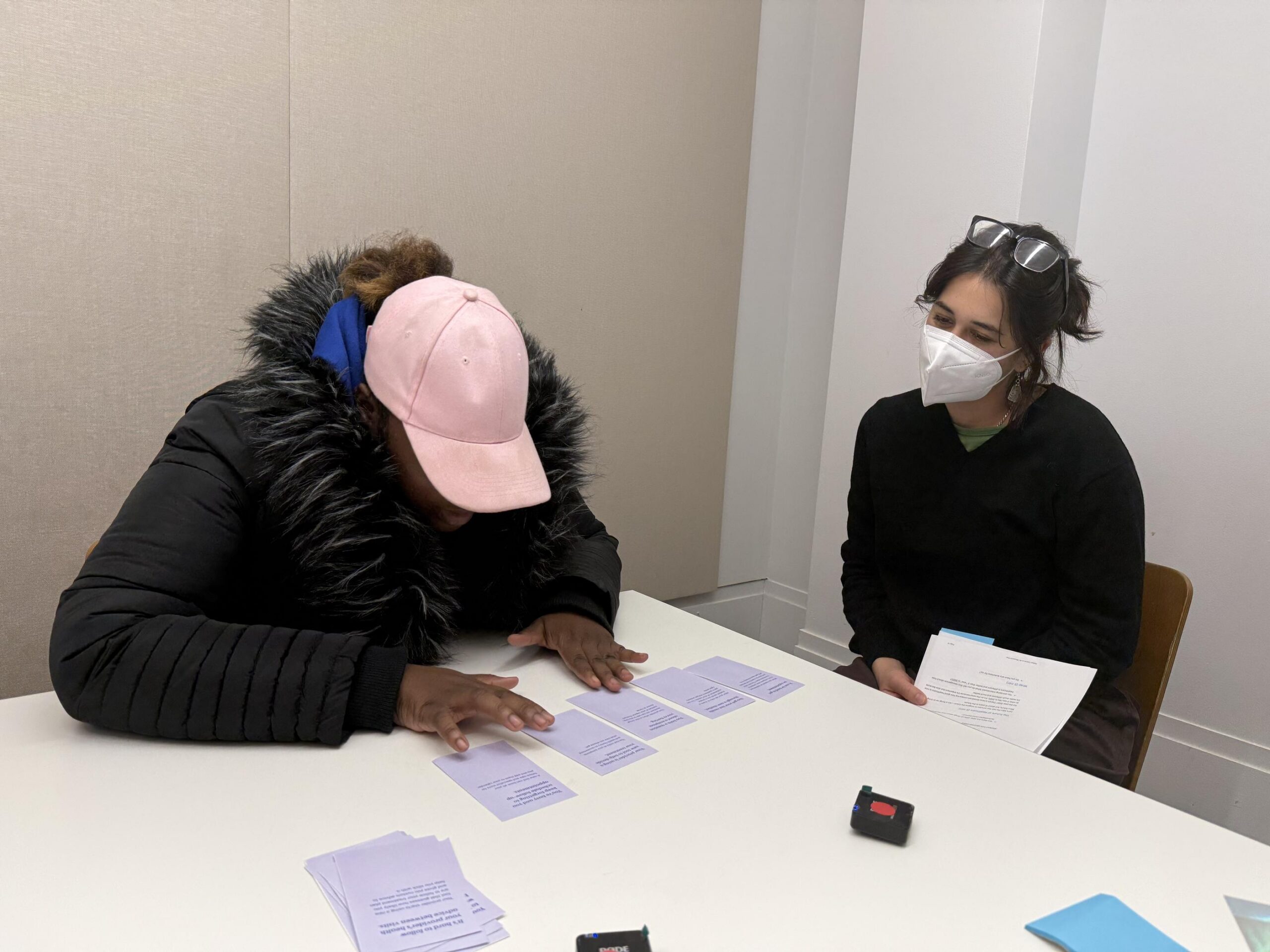

Using semi-structured interviews, we explored our inquiry areas and captured reactions to specific AI applications and clinical contexts. We also used design stimuli, a set of scenario cards representing potential uses of AI, to tease out participants’ perceptions and tradeoffs.

To complement our primary research, we compiled academic literature, professional journals, and reputable innovation news sources to fill gaps and provide additional context.

What We Heard

The videos below provide a preliminary overview of key moments from the field before synthesis or meaning-making.

We synthesized 320+ data points into insights organized across three categories: hopes, concerns, and needs.

A PPL researcher conducts an interview with a participant using design stimuli.

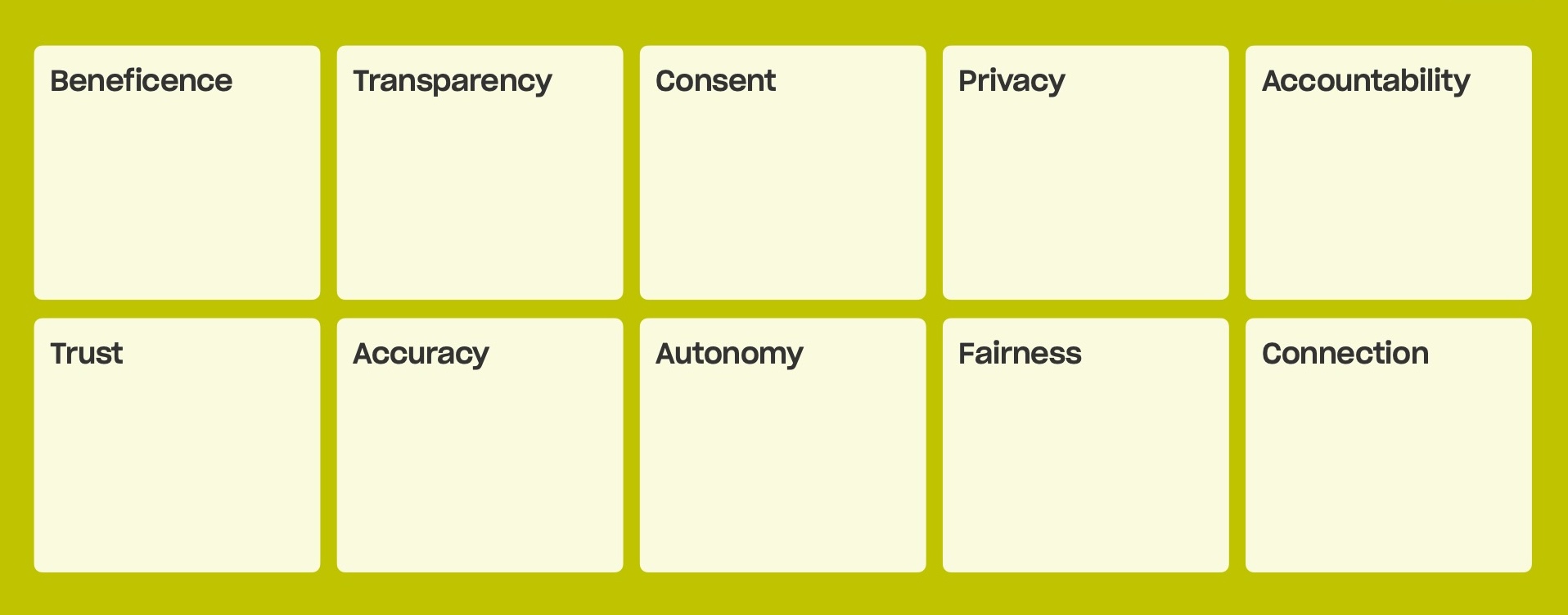

Drawing from these insights and established ethical AI frameworks, we developed ten design principles to guide the ethical implementation of AI in primary care. These principles are designed to guide healthcare system leaders as they make decisions about AI tool selection, implementation, and patient communication, with broader relevance for policymakers and AI technologists working to ensure AI serves patients' needs rather than undermining them.

Numerous frameworks for ethical AI have been developed globally — from the OECD AI Principles to WHO guidance on AI in health care — with convergence around principles like fairness, beneficence, and transparency. Research consistently identifies a gap between these principles and the challenges of implementing them in practice. This work aims to bridge that gap by having patients tell us, in their own words, which principles matter most and what each looks like in the context of their own care.

Notably, nine of the ten principles reflect areas of convergence in existing ethical AI frameworks; the exception is Connection. The relationship between patients and their providers emerged as one of the most important themes in our research, yet it falls outside the scope of most ethical frameworks. This finding underscores why patient voices are essential: not just to define how principles apply in practice, but to identify which principles matter in the first place.

Design Principles

These principles provide a shared foundation for consistent, intentional decision-making across teams and disciplines.

Consent: Patients want plain-language written materials they can review on their own time, paired with in-person conversations where they can ask questions, and they prefer a comprehensive consent form over a brief summary. Meaningful consent requires that patients can genuinely decline AI-assisted care; when that’s not possible, consent feels like a formality.

Accountability: Patients need to know that a human provider remains reachable and responsible for medical decisions, and worry that over-reliance on AI could lead to cognitive deskilling and diffused responsibility. When accountability is clear — including plain-language documentation of how AI is used, who oversees it, and how decisions can be contested — patients feel safer trying new approaches to care.

Trust: Patients trust their providers’ judgment more than they trust “AI,” and any introduction of AI should strengthen the patient-provider relationship rather than replace it. For lower-stakes decisions patients are comfortable with AI autonomy, but for higher-stakes ones they expect their provider to have the final say.

Autonomy: Patients report feeling powerless in healthcare systems and want more autonomy, particularly in decisions with direct personal impact like treatment choices. Tools that increase transparency — like patient portals — have made patients feel more empowered, and when autonomy is absent, patients turn to outside resources like consumer AI chatbots instead.

Fairness: Patients from historically marginalized communities expect AI to follow familiar patterns of exclusion, encoding existing biases and disregarding their perspectives — and they worry about AI displacing jobs. At the same time, many of these same patients are already turning to AI chatbots as a non-judgmental alternative, hopeful that AI might reduce the impact of provider bias and improve access to quality care.

Accuracy: Patients are aware of AI’s potential for errors and hallucinations, but are equally frustrated by existing inaccuracies in their care, such as misdiagnosis and insufficient examination. They’re hopeful about AI’s analytical potential, but only with robust human oversight and transparency about the limitations of any tools being used.

Privacy: Many patients feel general data privacy has already been significantly compromised and are willing to trade some privacy for better care — provided data use is transparent, de-identified, and serves a clear benefit to them or others. However, some patients remain wary, and those who feel in control of their data are more open to new tools and more likely to share sensitive information with their providers.

Transparency: Patients feel their current care already lacks adequate transparency, and want disclosure about AI involvement — its purpose, data use, error rates, known biases, and accountability structures — in detailed written form they can review at their own pace. They also want the conversational equivalent: providers who can answer questions and be a trusted source of information about how AI is being used in their care.

Beneficence: Patients are skeptical that AI in primary care is primarily for their benefit, and worry it will be deployed to increase efficiency and cut costs rather than improve care quality. They want honest, specific communication about how AI tools will help them — not vague claims about innovation — and expect that any efficiency gains translate into more provider time, greater access, and better outcomes.

Connection: Even patients who have had negative experiences in their medical care — feeling dismissed, judged, or misdiagnosed — don’t want their providers replaced by AI. Patients told us that good care is ultimately defined by a consistent, empathic relationship with a provider, and that well-designed AI could strengthen that relationship by freeing up more time for the interactions that make patients feel heard and cared for.

I just don't want to lose my real doctor. I just want a real doctor to always see me, talk to me, and answer my concerns. And I think that machine is not going to do that as well as a human being.

—Research Participant (Bronx, NY)

In February 2026, PPL delivered a final report to the Commonwealth Fund synthesizing insights and design principles from our research with 23 patients across New York City and Southwest Virginia. The report uses photos, quotes, and video material to help audiences hear directly from patients about their experiences and perspectives on AI in their primary care.

In the immediate term, the Commonwealth Fund will disseminate these findings so that patient insights inform decisions about AI tool selection, implementation, and patient communication. Over the medium and long term, these patient perspectives can be embedded more systematically into future grantmaking and health system decision-making around AI in primary care.

PPL is a tax-exempt 501(c)(3) nonprofit organization.

info@publicpolicylab.org

+1 646 535 6535

20 Jay Street, Suite 203

Brooklyn, NY 11201
